AI can help teams ship backend logic faster; no surprise there. The problem is that speed without validation turns compliance into a cleanup exercise. A visual governance layer changes that.

AI-assisted development has officially moved from "interesting experiment" to "part of the workflow." GitHub has said Copilot was already writing 46% of users' code in some contexts, and developer-speed gains are real. But faster code generation does not eliminate the need for human review, secure testing, or compliance controls. It just means teams now need a better way to govern a much larger volume of logic.

When AI starts generating backend workflows, API logic, and business rules, the bottleneck is no longer writing code. It is validating whether the code is correct, secure, testable, and defensible during an audit. AI can build quickly, but humans still need a visual, governed way to inspect, validate, and promote what actually runs.

Risks of shipping AI-generated code without proper validation

AI-generated code can fail in subtle, plausible, and annoyingly hard-to-spot ways. The output may be syntactically valid while still being behaviorally wrong: the wrong approval path, the wrong access rule, the wrong data flow, the wrong assumption about edge cases. That is exactly the kind of issue that slips past teams when everyone is moving quickly and the code "looks fine." OWASP's Top 10 for LLM Applications warns that passing LLM output downstream without validating it can lead to security exploits, including code execution and data exposure.

From a compliance perspective, this is where the real pain starts. Security and compliance failures are not always dramatic breaches with intense movie-trailer music. Sometimes they are much less glamorous: an endpoint exposing too much data, a workflow bypassing an approval step, missing evidence of review, or business logic that no longer matches policy. Great. These are exactly the kinds of failures that create audit findings, policy exceptions, and long Slack threads nobody enjoys.
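To make the "endpoint exposing too much data" failure concrete, here is a minimal sketch of an automated check a team might run against an AI-generated endpoint's response before promoting it. It assumes a simple field allowlist policy; `ALLOWED_FIELDS`, `find_policy_violations`, and the sample response are hypothetical illustrations, not part of any specific platform's API.

```python
# Hypothetical policy: the only fields a public "get user" endpoint may return.
ALLOWED_FIELDS = {"id", "display_name", "created_at"}


def find_policy_violations(response: dict, allowed: set[str]) -> list[str]:
    """Return the sorted names of response fields the policy does not allow."""
    return sorted(set(response) - allowed)


# Hypothetical output from an AI-generated endpoint: it runs fine and
# "looks" reasonable, but it leaks fields the policy never permitted.
generated_response = {
    "id": 42,
    "display_name": "Ada",
    "created_at": "2024-01-01T00:00:00Z",
    "email": "ada@example.com",
    "password_hash": "redacted-hash",
}

violations = find_policy_violations(generated_response, ALLOWED_FIELDS)
if violations:
    # prints: Blocked: response exposes disallowed fields ['email', 'password_hash']
    print(f"Blocked: response exposes disallowed fields {violations}")
```

An allowlist is the safer default compared with a blocklist: any newly generated field is rejected until someone decides it belongs in the response.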

Why manual validation becomes a delivery tax

In theory, teams can manually review and test AI-generated code without a validation or governance layer. In practice, that approach scales poorly. Traditional code review was designed for human-written code in human-sized pull requests. Xano makes this point explicitly: line-by-line review does not scale when AI is generating entire backends across endpoints, data models, and workflows.

That creates a nasty operational tradeoff. Teams either slow down delivery so senior developers can inspect everything manually, or they move quickly and accept more ambiguity around what the system is actually doing. Neither option is especially inspiring. Manual validation also makes compliance labor-intensive. Engineers wind up acting as full-time translators between raw code, business intent, and audit evidence. That is not a great use of expensive technical talent, and it does not get easier as AI-generated logic spreads across more teams, more services, and more release cycles.

NIST's Secure Software Development Framework (SSDF, SP 800-218) emphasizes integrating secure development practices throughout the SDLC rather than treating security verification as an afterthought. That is hard to sustain when verification depends on people reading every line: the more output AI produces, the more painful continuous verification becomes if review remains mostly manual.

How AI code validation and governance can help

A validation and governance layer sits between AI-generated output and production deployment. Its job is not to replace developers. Its job is to make AI-generated logic inspectable, testable, reviewable, and governable before it becomes business-critical behavior.

In Xano's model, that governance layer has a few practical parts.

- First, logic is generated within standardized, opinionated backend patterns so APIs and workflows follow a predictable structure.

- Second, teams can visually validate the generated logic rather than relying solely on raw code inspection.

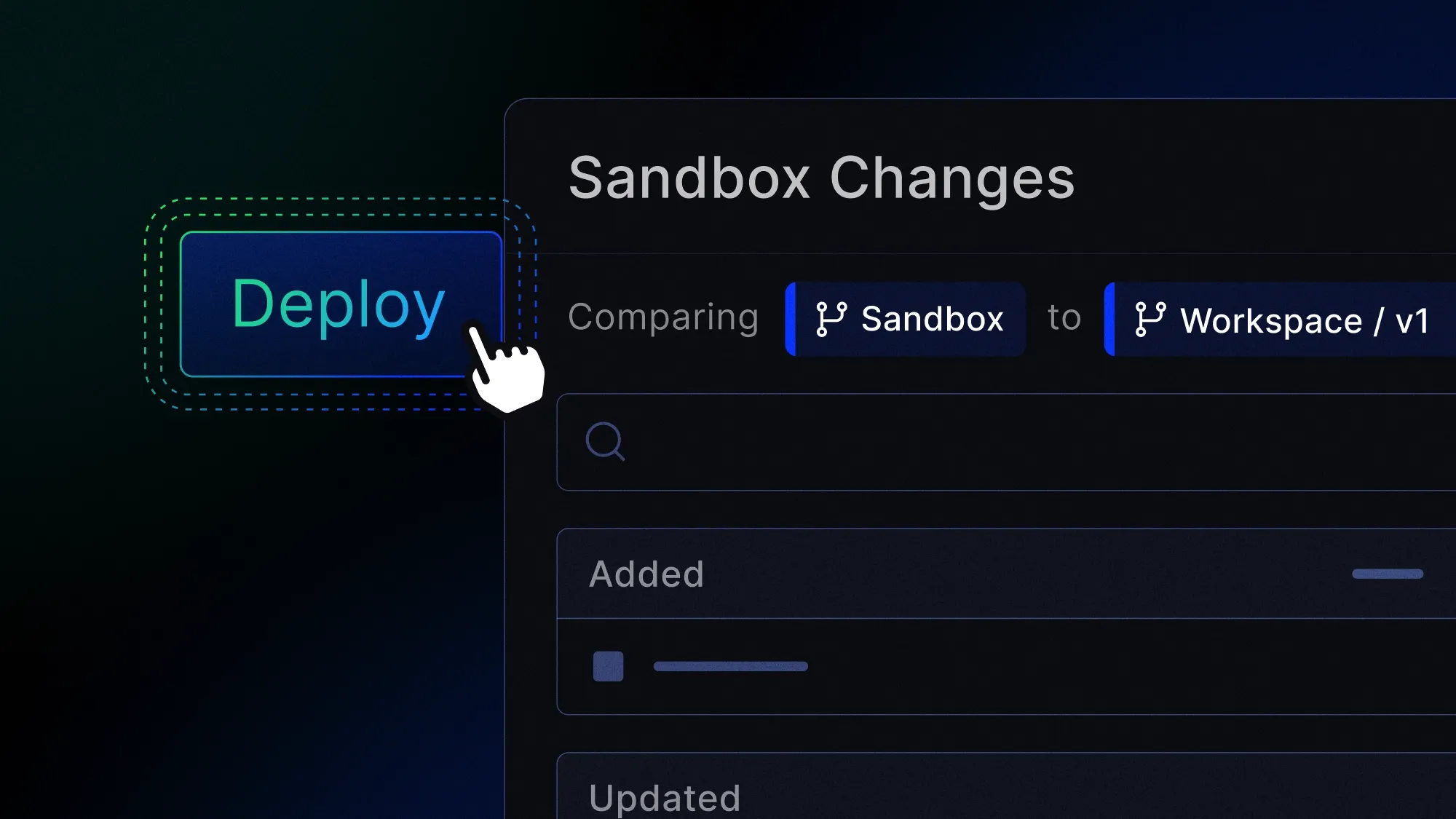

- Third, changes can be tested in isolated environments before they are promoted.

- And fourth, teams maintain visibility into business logic through audit trails and standardized architecture.

This combination is the difference between "AI wrote something" and "we can actually understand, validate, and trust what is running."

This is also why a governance layer can speed up delivery rather than slow it down. Without one, every AI-generated change adds hidden review debt. With one, review becomes structured and repeatable. Developers can move quickly because they are not re-deriving system intent from raw output every time. Team leads can validate behavior at the logic level. Non-technical stakeholders can participate more easily when needed. And testing moves into a defined generate → validate → sandbox → promote workflow instead of becoming a heroic last-minute scramble. Xano explicitly positions this as a way to let humans review AI-generated logic without reading every line of code and to test AI-generated changes in isolated environments before they touch production.
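The generate → validate → sandbox → promote sequence can be sketched as a small state machine that refuses to skip stages and records who advanced each change and on what evidence. This is a hypothetical illustration under simple assumptions, not Xano's implementation or API; `Stage`, `Change`, `advance`, and the actor names are invented for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Stage(Enum):
    GENERATED = "generated"
    VALIDATED = "validated"
    SANDBOXED = "sandboxed"
    PROMOTED = "promoted"


# Each stage may only advance to the next one; skipping is not allowed.
NEXT_STAGE = {
    Stage.GENERATED: Stage.VALIDATED,
    Stage.VALIDATED: Stage.SANDBOXED,
    Stage.SANDBOXED: Stage.PROMOTED,
}


@dataclass
class Change:
    change_id: str
    stage: Stage = Stage.GENERATED
    audit_log: list[dict] = field(default_factory=list)

    def advance(self, target: Stage, actor: str, evidence: str) -> None:
        """Move to `target` only if it is the next stage, logging who and why."""
        if NEXT_STAGE.get(self.stage) is not target:
            raise ValueError(
                f"{self.change_id}: cannot move from {self.stage.value} to {target.value}"
            )
        # Record the transition before applying it, so every move leaves evidence.
        self.audit_log.append({
            "change_id": self.change_id,
            "from": self.stage.value,
            "to": target.value,
            "actor": actor,
            "evidence": evidence,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        self.stage = target


change = Change("orders-approval-endpoint")
change.advance(Stage.VALIDATED, actor="team-lead", evidence="logic reviewed visually")
try:
    # Attempting to skip the sandbox stage is rejected.
    change.advance(Stage.PROMOTED, actor="ci-bot", evidence="skipping sandbox")
except ValueError as err:
    print(err)  # prints: orders-approval-endpoint: cannot move from validated to promoted
```

Because every transition appends an audit record, the same mechanism that enforces the order also produces the review evidence compliance teams ask for.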

The compliance benefit: faster evidence, cleaner controls, fewer surprises

The biggest compliance advantage of a validation and governance layer is not just that it helps catch bugs. It is that it makes control enforcement visible and repeatable. If you're a compliance nerd like me, you know this is music to your ears!

Compliance frameworks rarely care that your team moved fast. They care whether sensitive data was handled correctly, whether access controls were enforced, whether changes were reviewed, whether environments were separated appropriately, and whether your process can be demonstrated after the fact. Xano emphasizes visual validation, audit trails, standardized architecture, role-based access control (RBAC), isolated testing environments, and backend controls that support enterprise-grade delivery. Those are governance mechanics, not just convenience features.

That matters because compliance work is often slowed by limited visibility, not by lack of effort. Teams may have tested a change but lack a reliable way to prove what changed. They may have intended to enforce controls but cannot easily show where the logic lives or how it behaves. They may have strong developers but still lack a durable review model once AI starts generating more of the application. A visual validation layer helps turn backend behavior into something teams can inspect and explain, which is a much better place to be when security, legal, or an auditor comes asking questions.

Why standardized validation scales better across teams

The compliance challenge grows as AI-assisted development spreads beyond a single team. What feels manageable in one project becomes chaotic when multiple developers, products, or departments are all generating and shipping backend logic in slightly different ways. A governance layer creates consistency across that sprawl. It gives teams shared review patterns and a standardized way to validate what AI produces before it goes live. That consistency matters because much of compliance comes down to demonstrating that teams follow the same process every time. Xano's AI Coding Maturity Model provides a useful framework for understanding where your organization falls on this spectrum and where the gaps are.

Why this matters for security too

Security and compliance are not the same thing, but in this context, they are very much coworkers. A visual governance layer helps teams catch insecure data flows, logic flaws, and unsafe assumptions before release. It also supports safer collaboration by making backend behavior understandable to more than the one engineer who prompted the AI that day. Xano's platform language reflects this directly: visual validation helps teams review, validate, and refine AI-generated outputs; isolated environments keep risky changes from reaching production; and audit-friendly visibility makes logic easier to trust over time.

This is especially relevant as organizations bring AI into the SDLC while still meeting established security and privacy expectations. For teams operating in regulated or audit-heavy environments, the framing that matters is this: generate code fast, with continuous governance along the way. For a deeper look at which backend platforms offer enterprise-grade security, Xano's comparison is worth a read.

Why Xano?

Xano fits this conversation best not as "another AI coding tool," but as the governance layer that makes AI-assisted backend development more operationally safe. AI can generate backend logic quickly, but Xano provides the visual validation, standardized patterns, human-in-the-loop review, isolated testing environments, and audit-friendly visibility needed to govern what gets shipped.

That is a useful position for application development leaders because it ties directly to outcomes they actually care about: faster delivery, less review bottlenecking, more consistent backend logic, stronger control visibility, and fewer ugly surprises during security review or compliance prep. Or said less politely: speed is great, but not if your audit readiness collapses the minute the AI starts being productive.

Ready to build a backend you can really see, audit, and govern? Schedule a demo to learn more about what Xano can do for your team.