AI is writing more code than ever—and trust in that code has never been lower.

GitHub reported that 46% of code in 2025 was AI-generated. Stack Overflow found that only 3% of developers "highly trust" AI output. We're producing enormous volumes of code that the people responsible for it don't feel confident about, and the analysts are sounding the alarm: Gartner predicts a 2,500% increase in software defects from AI by April 2028. Forrester warns that 75% of tech leaders will face severe technical debt from AI code.

We've spent the last two years optimizing for how fast AI can write code and how much it can produce. That was the right focus for the adoption phase. But we've entered a different phase now. The question isn't "Can AI write code?" It's "Can your organization govern what AI writes?"

Most organizations don't have a good answer. No shared framework exists for even talking about the problem. Every team is improvising—some logging AI output, some ignoring it, some building bespoke review processes that vary project to project. There's no common language for assessing where you are, where you need to be, or what the gaps look like.

That feels like a recipe for disaster.

The case for acting before the disaster

This pattern isn't new. After the Wright brothers, aviation spread fast with no licensing, no air traffic control, and no maintenance standards. Governance came down to individual judgment: pilots were expected to be careful. It took fatal, high-profile crashes before the regulatory system that eventually became the FAA took shape.

Early internet security followed the same arc. In the late '90s, companies were putting systems online as fast as they could. Security was improvised. Some had firewalls. Some had policies on paper that nobody followed. There was no way to benchmark your posture or identify gaps. That changed when frameworks like the NIST Cybersecurity Framework and SOC 2 gave the industry a common language.

In both cases, the framework arrived after the damage was done. The warning signs for AI-generated code are already here: the defect projections, the trust gap, the technical debt forecasts. We all saw the headlines when AI-generated code caused outages at Amazon (you all had a lot to say about it, too). The point is that we all see this coming. So here’s my call to action to everyone reading this: let’s build the governance framework proactively instead of waiting until a major incident makes it unavoidable.

What the model is

The AI Coding Maturity Model is a framework for assessing how well an organization governs AI-generated code and agent behavior. It doesn't measure how much AI you're using or how productive your developers are with it. It measures how much visibility and control you have over what AI is doing in your systems.

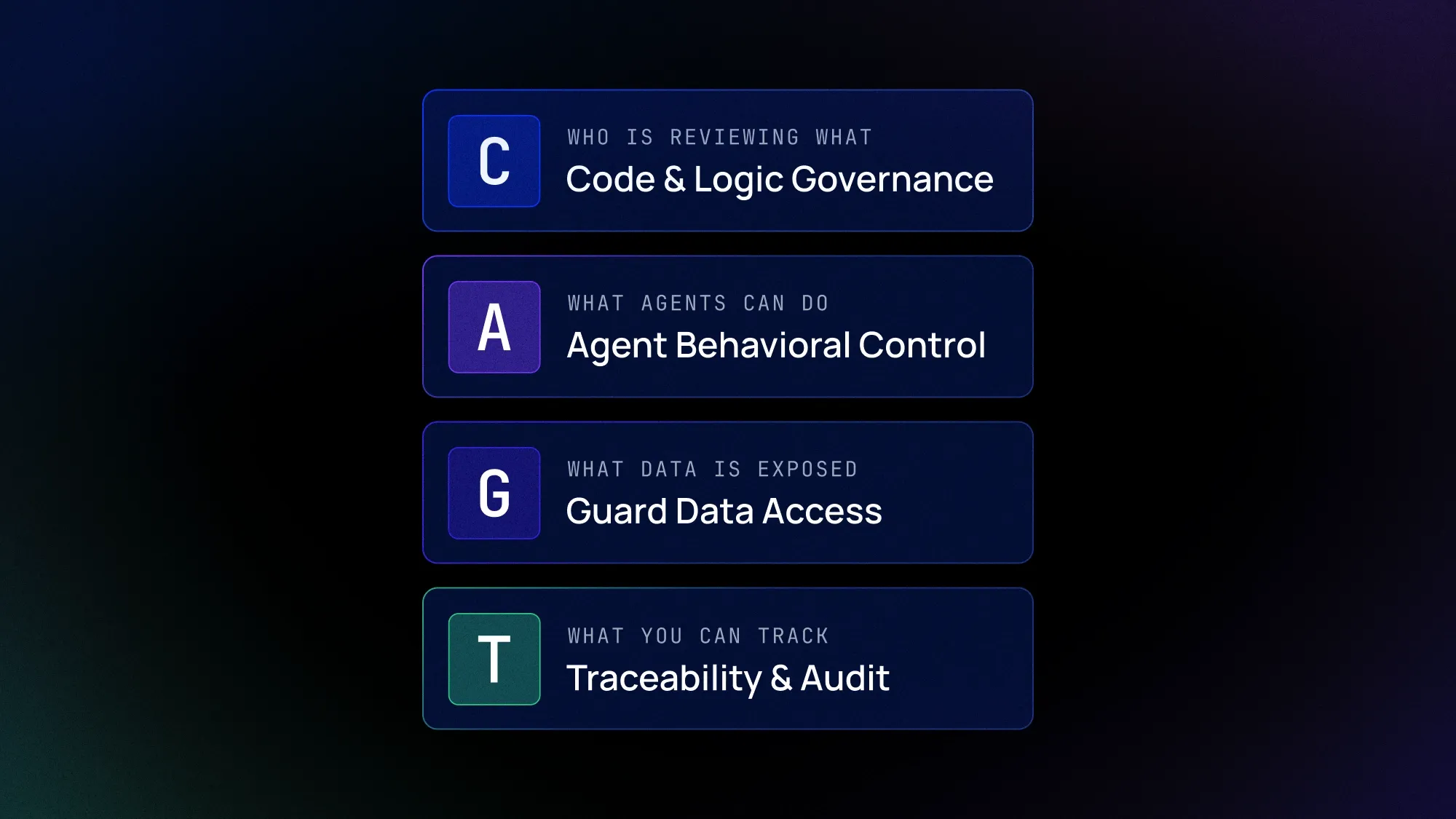

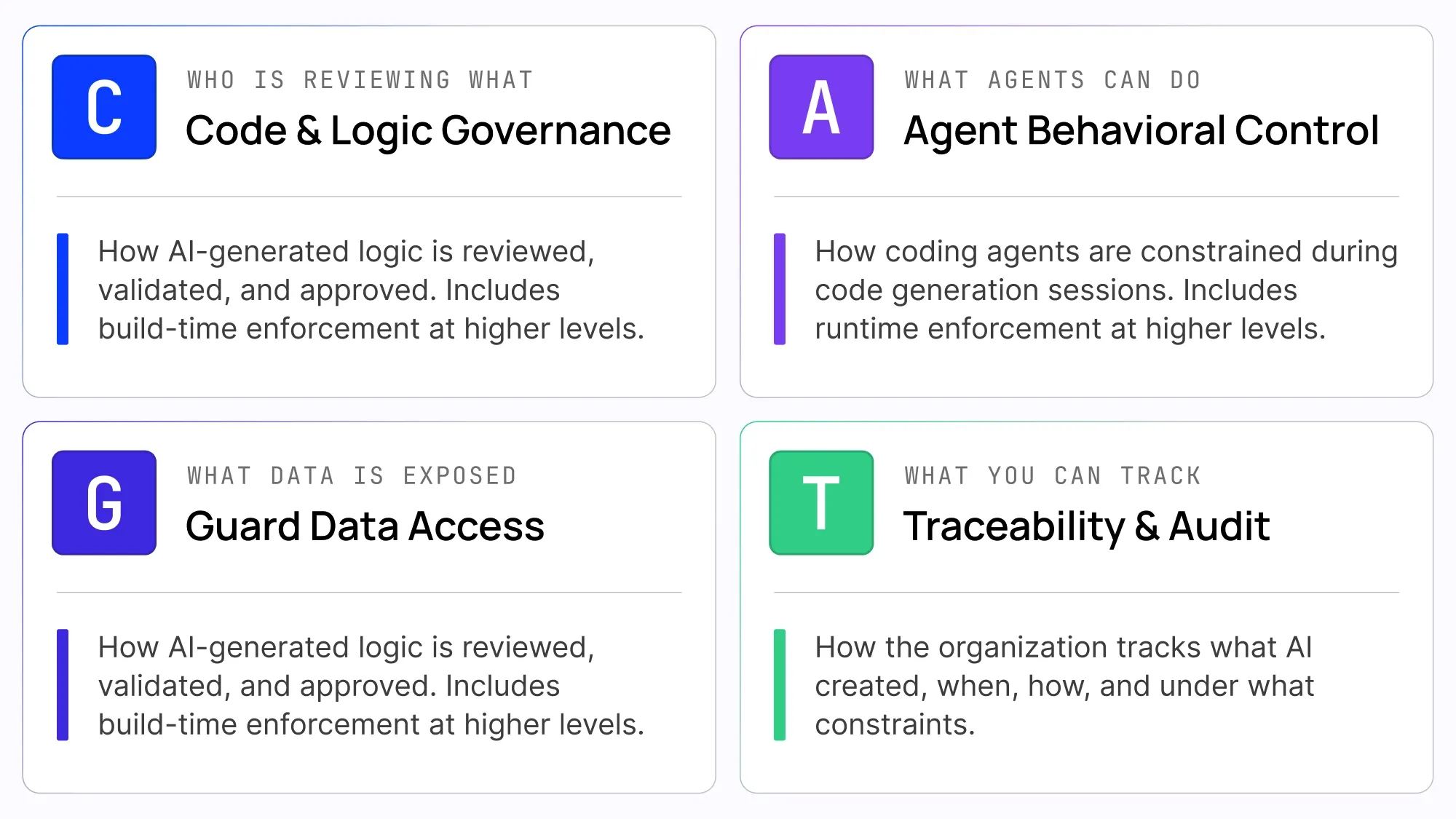

The model is organized around four governance domains. Each is scored on a 1–5 scale, from ad-hoc usage to optimized governance, producing a four-number score that gives a clear snapshot of where an organization stands.
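To make the four-number score concrete, here is a minimal sketch of how a team might record one. The class name, field names, and the `weakest_domains` helper are illustrative assumptions, not part of the model itself; the only things taken from the model are the four domains and the 1–5 range.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class MaturityScore:
    """Hypothetical container: one 1-5 level per governance domain."""
    code_and_logic: int
    agent_behavior: int
    data_access: int
    traceability: int

    def __post_init__(self) -> None:
        # Reject anything outside the model's 1-5 scale.
        for f in fields(self):
            level = getattr(self, f.name)
            if not 1 <= level <= 5:
                raise ValueError(f"{f.name} must be between 1 and 5, got {level}")

    def as_tuple(self) -> tuple:
        # The "four-number score" in a fixed domain order.
        return (self.code_and_logic, self.agent_behavior,
                self.data_access, self.traceability)

    def weakest_domains(self) -> list:
        # Domains tied for the lowest level: a simple way to spot gaps.
        lowest = min(self.as_tuple())
        return [f.name for f in fields(self) if getattr(self, f.name) == lowest]


score = MaturityScore(code_and_logic=2, agent_behavior=1,
                      data_access=2, traceability=1)
print(score.as_tuple())
print(score.weakest_domains())
```

Keeping the four numbers separate, rather than averaging them, preserves the snapshot quality: a team can be at Level 3 in one domain and Level 1 in another, and the gap is the useful information.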

Code and Logic Governance

This is the most intuitive one: how is AI-generated logic reviewed, validated, and approved before and after deployment? At the low end, AI generates code and it goes straight to production—no review gates, no distinction between AI-written and human-written code. At the high end, the platform itself enforces organizational rules automatically. Not "a human reviewer is supposed to catch violations," but "the system won't let violating code deploy." The progression here is from human-dependent process to platform-enforced policy.
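The difference between "a reviewer is supposed to catch it" and "the system won't let it deploy" can be sketched as a gate that runs before deployment. Everything here is hypothetical: the `Change` type, the rule that AI-generated changes need human approval, and the `deploy` function are assumptions chosen for illustration, not a description of any real platform.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical record of one change in a deployment."""
    path: str
    ai_generated: bool
    human_approved: bool


def deploy_gate(changes):
    """Return a list of violations; an empty list means deployment may proceed."""
    return [
        f"{c.path}: AI-generated change has no human approval"
        for c in changes
        if c.ai_generated and not c.human_approved
    ]


def deploy(changes):
    # The gate is enforced in code: a violating change cannot ship,
    # no matter what a reviewer intended to check.
    violations = deploy_gate(changes)
    if violations:
        raise PermissionError("; ".join(violations))
    return "deployed"


changes = [
    Change("billing/invoice.py", ai_generated=True, human_approved=True),
    Change("auth/login.py", ai_generated=True, human_approved=False),
]
print(deploy_gate(changes))
```

In this sketch the second change blocks the whole deployment. A real organizational rule set would be richer, but the shape is the same: the policy lives in the pipeline, not in a guideline document.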

Coding Agent Behavioral Control

This domain asks a question most organizations haven't considered: what boundaries exist on the AI tools themselves? When a coding agent—an AI assistant, an MCP client, an AI builder—is generating backend logic, what prevents it from modifying authentication flows, creating new external API endpoints, or introducing database operations it shouldn't? At the low end, agents operate without any defined boundaries. At the high end, the platform scopes agents to specific domains and data tables, and blocks generation that crosses those boundaries regardless of the developer's own permissions.
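As a rough sketch of what scoping an agent could look like, the check below compares a generation request against the agent's own allowed domains and tables. The `AgentScope` and `GenerationRequest` types and the domain and table names are invented for illustration. Note that the check deliberately takes no developer-permission argument, which is one simple way to model "regardless of the developer's own permissions."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical boundary assigned to a coding agent."""
    allowed_domains: frozenset
    allowed_tables: frozenset


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical description of what the agent wants to generate."""
    domain: str
    tables: frozenset


def is_allowed(scope: AgentScope, request: GenerationRequest) -> bool:
    # The request must stay inside the agent's domain AND touch only
    # tables the agent is scoped to (subset check).
    return (request.domain in scope.allowed_domains
            and request.tables <= scope.allowed_tables)


billing_agent = AgentScope(
    allowed_domains=frozenset({"billing"}),
    allowed_tables=frozenset({"invoices", "payments"}),
)

in_scope = GenerationRequest("billing", frozenset({"invoices"}))
crosses_boundary = GenerationRequest("billing", frozenset({"invoices", "users"}))
wrong_domain = GenerationRequest("auth", frozenset())

print(is_allowed(billing_agent, in_scope))
print(is_allowed(billing_agent, crosses_boundary))
print(is_allowed(billing_agent, wrong_domain))
```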

Guarded Data Access

AI-generated code accesses data. The question is whether it does so through the same permissions as the developer who prompted it, or through a governance layer that accounts for the unique risks of AI-generated logic paths. This domain covers data classification, differentiated access controls for AI logic, and rules governing cross-domain data flows—like preventing AI from combining PII with external API calls in the same execution path. At higher maturity levels, data access governance is enforced at the execution layer in real time, and access boundaries adapt to context.
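The PII example above can be expressed as a rule over an execution path. This is a minimal sketch under stated assumptions: the `Step` type, the `"pii"` classification label, and the idea that an execution path is available as a flat list of steps are all hypothetical simplifications.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    """Hypothetical step in an AI-generated execution path."""
    name: str
    reads_classification: Optional[str] = None  # e.g. "pii", "public"
    calls_external_api: bool = False


def combines_pii_with_external_call(path) -> bool:
    # Flag any path that both reads PII and calls out to an external API.
    reads_pii = any(s.reads_classification == "pii" for s in path)
    calls_out = any(s.calls_external_api for s in path)
    return reads_pii and calls_out


safe_path = [
    Step("load_customer", reads_classification="pii"),
    Step("render_invoice"),
]
risky_path = [
    Step("load_customer", reads_classification="pii"),
    Step("post_to_enrichment_service", calls_external_api=True),
]

print(combines_pii_with_external_call(safe_path))
print(combines_pii_with_external_call(risky_path))
```

A check like this only works if data is classified in the first place, which is why classification sits in this domain alongside the access rules.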

Traceability and Audit

This one answers a deceptively simple question: What did AI do? Can you distinguish AI-generated code from human-written code? Can you trace a logic block back to the prompt that created it, the model version that generated it, and the organizational rules that were active at creation time? At the low end, there's no record at all. At the high end, traceability data is immutable and cryptographically verifiable, with a full AI Bill of Materials maintained automatically for every component.
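One common way to make an audit trail tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below applies that idea to the provenance fields mentioned above (prompt, model version, active rules). The record layout and field names are assumptions for illustration, and a hash chain alone detects tampering rather than preventing it; it is not a full AI Bill of Materials.

```python
import hashlib
import json


def record_hash(record: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same.
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, record: dict) -> None:
    # Each entry commits to the hash of the entry before it.
    prev_hash = log[-1]["hash"] if log else ""
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": record_hash(record, prev_hash),
    })


def verify(log: list) -> bool:
    # Recompute every hash; any edited or reordered entry breaks the chain.
    prev_hash = ""
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, {
    "component": "billing/invoice.py",   # hypothetical values throughout
    "prompt": "Add late-fee calculation",
    "model_version": "example-model-1",
    "active_rules": ["no-pii-egress"],
})
append(log, {
    "component": "billing/refund.py",
    "prompt": "Add refund endpoint",
    "model_version": "example-model-1",
    "active_rules": ["no-pii-egress"],
})
print(verify(log))

log[0]["record"]["prompt"] = "something else"  # simulate tampering
print(verify(log))
```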

Where most organizations are today

I’m curious to hear what you think, but based on my conversations with customers and partners, I believe the vast majority of organizations are operating at Level 1 or Level 2 across all four domains. At Level 1, there's essentially no governance—AI generates code and it ships with little to no distinction from human-written code, no agent boundaries, no differentiated data access controls, and no traceability. At Level 2, teams have started to acknowledge the problem and put some ad-hoc processes in place, but enforcement depends entirely on individuals following guidelines rather than systems enforcing policy.

This isn’t meant to be a criticism, and I’m not suggesting every organization needs to be at maturity level 5 tomorrow. Frankly, that’s not even possible; we don’t have the tools yet. But I am suggesting that every organization needs a way to assess where they stand—and a shared language for the conversation about where they need to go.
