I spend a lot of time talking to engineering teams, usually in the context of how they're building their backends, but increasingly about how AI is changing the way they work. Over the past year, I've noticed a pattern.

Engineering leaders tell me their teams are moving faster than ever. Output is up, and features that used to take weeks now ship in days. They acknowledge that AI-generated code carries risk, but many feel rigorous testing can manage it.

On the enterprise architecture side, the tone is more cautious. Enterprise architects (EAs) worry that AI is proliferating across the landscape faster than they can track, and that it is undermining the landscape's architectural integrity.

Both positions have truth to them. But I've come to believe both sides are missing the most important part of the problem, because it lives in the gap between them.

AI turned a slow problem into a fast one

When an AI coding tool generates backend logic, where does that logic come from? Does it reference the organization's canonical source of truth for a business rule? Or does it generate its own interpretation based on a prompt?

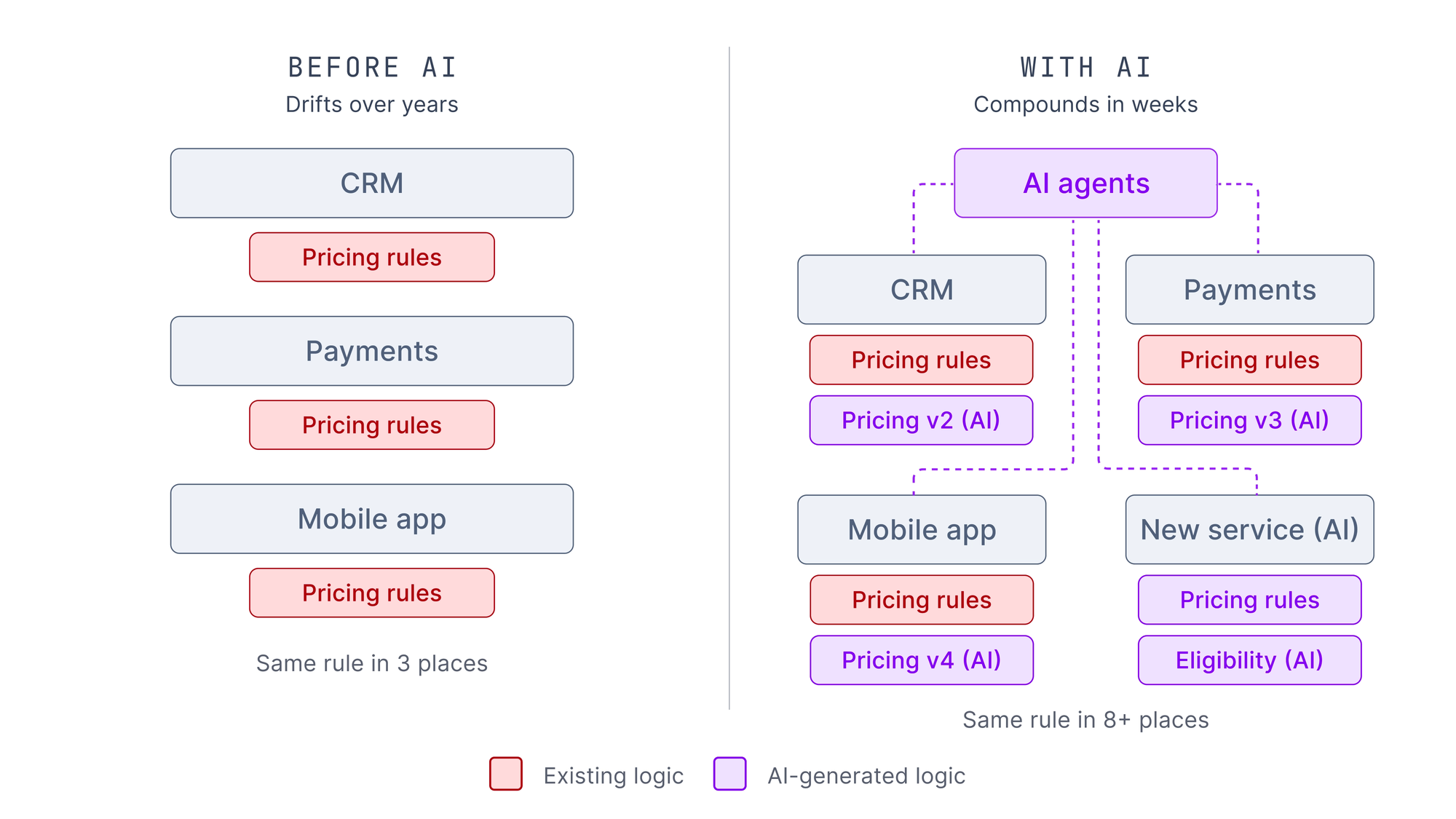

In almost every case, it's the latter—because in most organizations, there is no canonical source of truth. The AI generates its best version of the pricing logic, the eligibility check, the compliance rule, and deposits it wherever it's building. A new service gets its own copy, a new workflow gets its own version, a new integration implements its own interpretation.

The engineering leader sees faster output. The enterprise architect sees more AI tools on the landscape. Neither is naturally positioned to see that business logic is being scattered across the organization at a pace that's outrunning anyone's ability to track it. What used to drift over years now compounds in weeks.

Two layers, one blind spot

This is the problem that lives in the gap—and to understand why it's so hard to fix, you have to understand why neither side owns it.

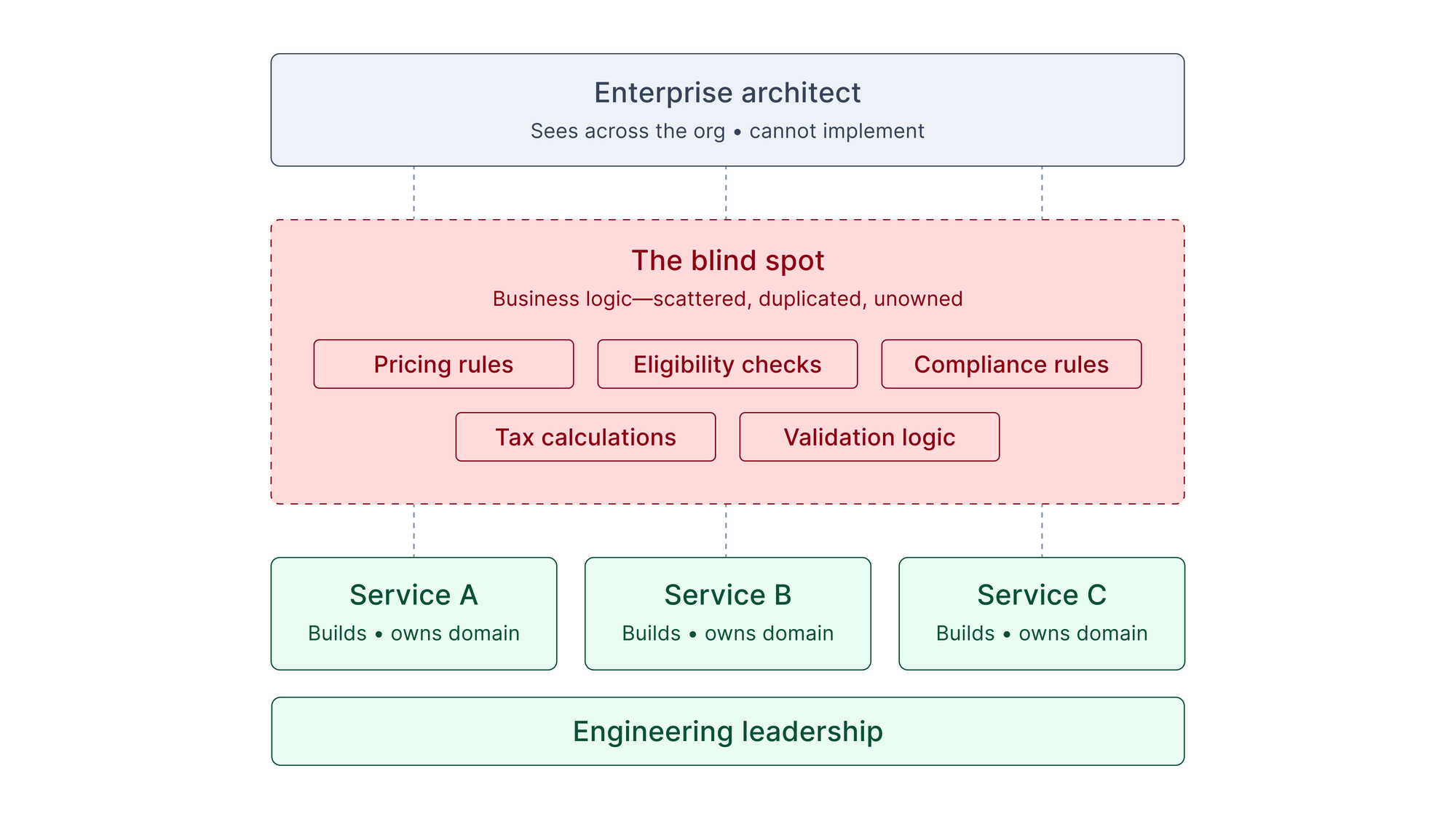

The EA governs the landscape: what platforms the organization uses, how systems integrate, what architectural standards teams follow. Engineering leaders govern what gets built: how teams ship software, what goes to production, how technical decisions get made. Both have a clear view of their own layer. But scattered business logic falls between them.

I've talked about this in previous Futureproof newsletters, and it comes to the forefront again here. In most enterprise engineering organizations, business logic (the rules that govern how the business actually operates) has no clear home. Pricing rules, eligibility checks, tax calculations, and compliance requirements are duplicated across services, embedded in application code, and owned by whoever happened to write them last.

Engineering leaders experience this as a maintenance burden: a regulation changes and the team spends two weeks hunting down every implementation. Enterprise architects experience it as an architectural gap: they know the logic is scattered, but it's never been part of their reference architecture.

The EA doesn't track where individual business rules live, because that level of detail is too granular for a reference architecture. The engineering leader owns their team's systems, not the cross-organizational question of whether the same eligibility check exists six different ways. For a long time, organizations absorbed this as business as usual. AI made it intolerable: sprawl that used to take years to build up now accumulates in weeks.
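To make the "six different ways" problem concrete, here is a minimal Python sketch of two copies of the same eligibility check that have quietly drifted apart. Everything in it is hypothetical: the `Applicant` type, the function names, the service names in the comments, and the 30,000 income threshold are illustrative assumptions, not rules from any real system.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Applicant:
    age: int
    annual_income: float


# Copy 1: imagined to live in an onboarding service.
def is_eligible_onboarding(applicant: Applicant) -> bool:
    return applicant.age >= 18 and applicant.annual_income >= 30_000


# Copy 2: imagined to be generated later for a partner integration.
# Same rule in intent, but the income boundary is strict (> instead of >=).
def is_eligible_partner_api(applicant: Applicant) -> bool:
    return applicant.age >= 18 and applicant.annual_income > 30_000


def find_disagreements(applicants: List[Applicant]) -> List[Applicant]:
    """Return the applicants on which the two copies give different answers."""
    return [
        a for a in applicants
        if is_eligible_onboarding(a) != is_eligible_partner_api(a)
    ]


sample = [
    Applicant(age=25, annual_income=45_000),
    Applicant(age=30, annual_income=30_000),  # sits exactly on the boundary
    Applicant(age=17, annual_income=80_000),
]
disagreements = find_disagreements(sample)
```

Both functions pass a casual review and agree on typical inputs. They diverge only for the applicant on the boundary, which is the kind of difference that neither a team-level test suite nor a landscape-level technology map is likely to surface.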

Why neither side can solve this alone

When either side tackles this independently, they reach for the tools they already have—and those tools aren't sufficient.

Enterprise architects look toward tighter governance: more review processes, stricter standards for which AI tools are approved. But if the underlying architecture allows business logic to be scattered, governing the tools doesn't fix anything.

Engineering leaders look toward refactoring: consolidating duplicated code. But this is scoped to their domain—they can fix logic sprawl within their systems, but can't mandate changes to how other teams handle the same rules.

The EA has cross-cutting visibility without implementation authority. The engineering leader has implementation authority without cross-cutting visibility.

The collaboration that AI demands

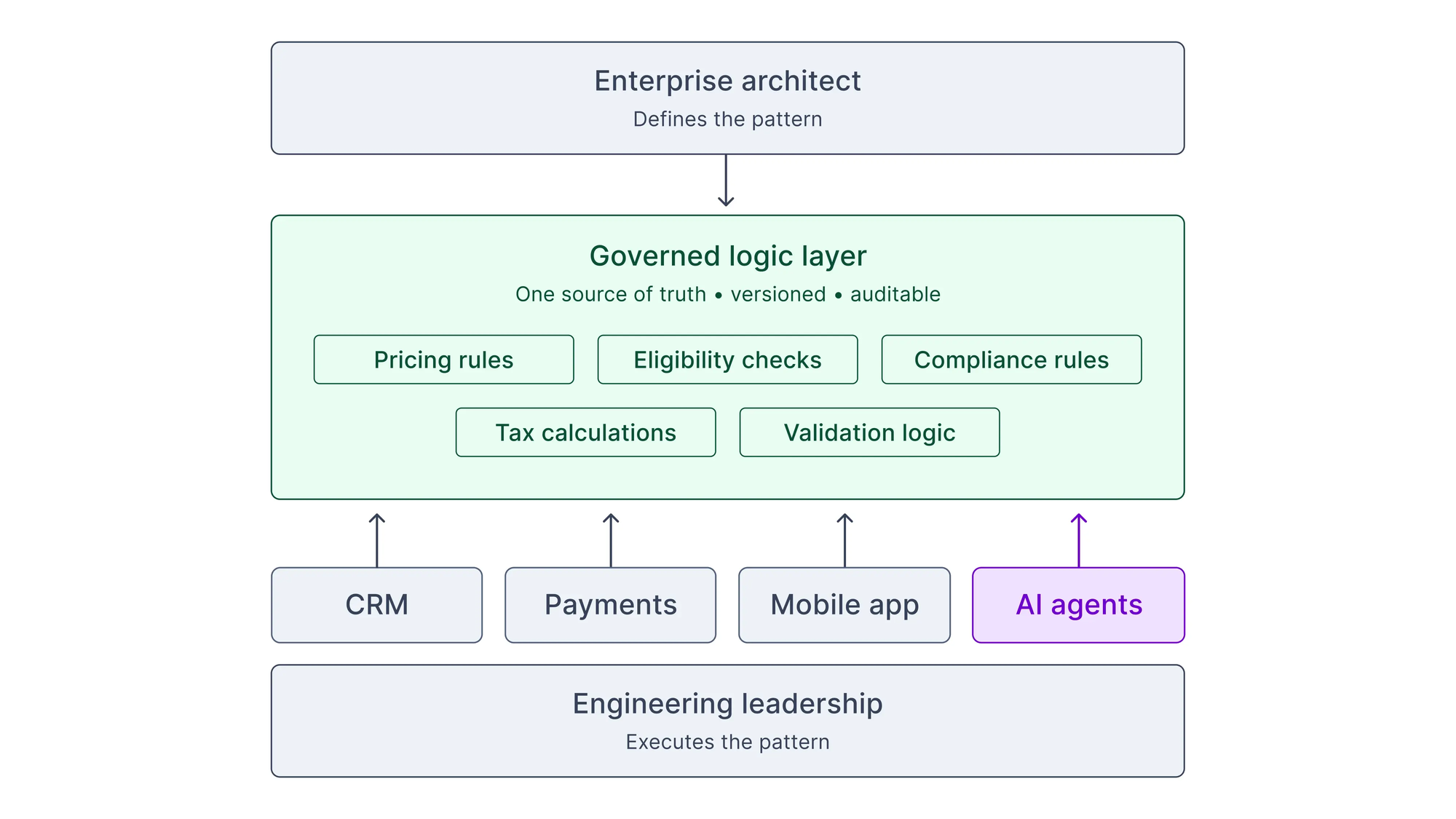

Enterprise architects and engineering leaders need to jointly own a principle that neither has traditionally owned alone: critical business logic must be architecturally centralized.

The EA's role is to establish this as an architectural standard—the same way an EA might mandate centralized authentication or an approved integration layer. Critical business rules must be governed through centralized, versioned services that other systems call rather than reimplement. The EA defines the pattern.
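One way to picture "centralized, versioned services that other systems call rather than reimplement" is the sketch below. It is an in-memory stand-in, not a production design: `RuleRegistry`, `Decision`, the `loan_eligibility` rule name, and both thresholds are assumptions made up for illustration. In practice the registry would more likely sit behind a network service or a rules engine.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional, Tuple

Rule = Callable[[dict], bool]


@dataclass(frozen=True)
class Decision:
    rule: str
    version: int
    result: bool


class RuleRegistry:
    """In-memory stand-in for a centralized, versioned rule service."""

    def __init__(self) -> None:
        self._rules: Dict[Tuple[str, int], Rule] = {}
        self._latest: Dict[str, int] = {}

    def register(self, name: str, version: int, fn: Rule) -> None:
        # Published versions are immutable: a change means a new version.
        if (name, version) in self._rules:
            raise ValueError(f"{name} v{version} is already registered")
        self._rules[(name, version)] = fn
        self._latest[name] = max(version, self._latest.get(name, 0))

    def evaluate(self, name: str, facts: dict,
                 version: Optional[int] = None) -> Decision:
        # Callers get the latest version unless they pin one explicitly.
        if name not in self._latest:
            raise KeyError(f"unknown rule: {name}")
        chosen = self._latest[name] if version is None else version
        fn = self._rules[(name, chosen)]
        return Decision(rule=name, version=chosen, result=fn(facts))


registry = RuleRegistry()
registry.register(
    "loan_eligibility", 1,
    lambda f: f["age"] >= 18 and f["annual_income"] >= 30_000,
)
registry.register(
    "loan_eligibility", 2,
    lambda f: f["age"] >= 18 and f["annual_income"] >= 35_000,
)

facts = {"age": 30, "annual_income": 32_000}
latest = registry.evaluate("loan_eligibility", facts)
pinned = registry.evaluate("loan_eligibility", facts, version=1)
```

Every caller gets back not just an answer but the rule name and version that produced it, so "which version is authoritative" has a single place to be answered. When a regulation changes, the change is one new registered version rather than a hunt through every service.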

The engineering leader's role is to operationalize that principle. Extract scattered logic into governed services. Ensure AI coding tools reference centralized logic rather than generating their own copies. The engineering leader executes the pattern.

Together, they close the blind spot. The EA ensures the principle is established across the organization. The engineering leader ensures it's real within their domain.

What I expect to see

I think this will sort organizations into two camps quickly.

The first camp will recognize early that AI exposed a structural gap and move to close it together: EAs who expand their reference architectures to include logic centralization as a first-class principle, and engineering leaders who take that seriously enough to change how their teams build.

The second camp will look fine on the surface. The technology map will be current. The AI tools will be cataloged. But underneath, the logic layer will be incoherent—the same rules implemented dozens of different ways, no one able to say which version is authoritative. And when something changes—a regulation, a pricing model, a compliance requirement—the cost will hit all at once.
